We tried to run a backup every day at 3am.

That’s it. That’s the whole requirement.

And yet… it turned into a small odyssey through MCP, OpenClaw cron payloads, hooks, heartbeat routing, and a few “this should work” moments that absolutely did not work.

If you’re thinking: “How can scheduling a backup be hard in 2026?”… I’m with you. I wanted to believe it too.

But that’s the trap: we treat automation platforms like magic. Like you toss a cron job into the air and the system will catch it, interpret it, execute it, and leave a neat audit trail behind.

Reality is less romantic, especially when your requirement comes with some constraints. Cron doesn’t run your intent. Cron runs a payload. If you don’t know what payload you’re sending and where it routes, you’re not building a pipeline, you’re whispering into a void.

A bit of context

As many of my readers know, I like experimenting, and my team and I have been playing a lot with OpenClaw lately; so much so that we needed a way to schedule a full backup of it, in case “I” broke something (which happened a lot).

To make the experiment secure we used a very peculiar setup (blog post on that coming next), and we needed the backup to run in a way that “it” (OpenClaw) could “act” on the process using its own methods (e.g. an agent). One critical restriction is that the resulting compressed file must be encrypted without OpenClaw ever having the actual key. That way, if OpenClaw gets compromised, it will only leak its current state and possibly an encrypted “history”. Pretty neat, right? (Another blog post on that coming soon.)

What we wanted (the honest spec)

A daily encrypted backup of the OpenClaw state:

- Run daily at 3am

- Encrypt the backup with a password retrieved securely via an MCP tool (so OpenClaw will not have access to the encryption key).

- Store backups under a specific path

- Keep the last ‘n’ backups (delete older ones).

- Do this in a way we can control and debug from OpenClaw, not from the OS.

Simple. Reasonable. “Done in 10 minutes.”

Sure.

The system we had (and why it mattered)

We had two moving pieces:

- An MCP tool (MCP_secure_backup) that:

  - Backs up a directory

  - Retrieves the encryption secret via a vault service behind a gateway

  - Encrypts the file

- OpenClaw automation that can schedule things via openclaw cron.

So the plan was obvious: schedule a cron job. Trigger the tool. Have lunch.

The problem: OpenClaw cron supports more than one “kind” of scheduled execution, and they are not interchangeable. And this is the part that I initially missed.

Attempt #1: Cron in main session using a “system event”

We created a job like this:

- Target: --session main

- Payload: --system-event "automation:secure-backup"

It ran. The run history said “ok”. The system printed the reminder.

And yet the backup didn’t happen.

Why? Because this payload is not “run code”, it’s a reminder delivered into the main session’s heartbeat routing path.

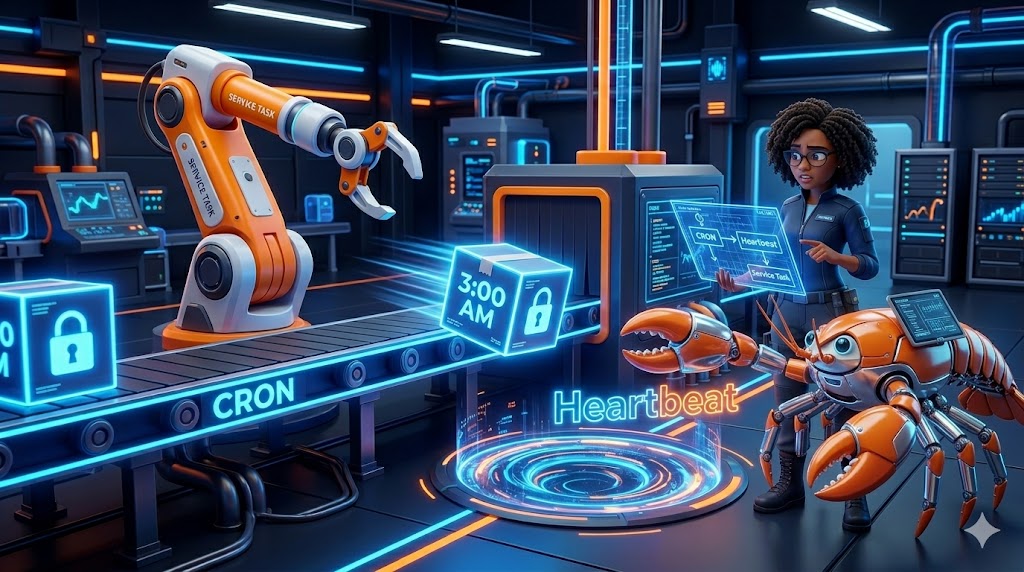

Think of it like this:

- We ordered the conveyor belt.

- We never installed the robot arm.

- The belt ran beautifully but nothing got picked up.

The docs actually said this, just not in the way my brain expected when I was in “ship mode”:

systemEvent: main-session only, routed through the heartbeat prompt.

That sentence is a “treaty”: it’s not documentation fluff, it’s the contract, and I violated it by assuming “routed” meant “executed”.

Attempt #2: Use hooks to catch the system event and run the script

Then we tried to be clever. We said “Okay, cron emits an event, hooks listen to events… We’ll write a hook.”

So we built a hook pack, subscribed it to events, restarted the gateway (more than once to be honest), and got the hook to show as:

- ✓ Ready

- Events: message:systemEvent (and later message)

We even instrumented it to dump the event envelope. But here’s the punchline: The hook never saw the cron system event.

It saw real inbound messages (webchat messages). That’s it.

And that’s when it hit us: cron systemEvents are not part of the hooks message stream…

They go somewhere else. Where? To the “heartbeat” runner path.

This is the kind of thing that makes engineers swear at 2am!!! Not because it’s “bad” per se, but because it violates the mental model most of us carry:

“An event is an event”

But nope, not here, not in OpenClaw’s architecture: Different subsystems, different pipelines, different contracts.
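A toy Python sketch of that mental model may help. It is not OpenClaw’s implementation, and every name in it (ToyGateway, on_message, cron_system_event) is made up for illustration. It only shows the idea that a hook subscribed to one stream never sees a payload queued on another.

```python
from __future__ import annotations

from collections import deque
from typing import Callable


class ToyGateway:
    """Toy model only: two separate pipelines that do not share events."""

    def __init__(self) -> None:
        self.message_hooks: list[Callable[[str], None]] = []
        self.heartbeat_queue: deque[str] = deque()

    def on_message(self, hook: Callable[[str], None]) -> None:
        # Hooks subscribe to the inbound message stream only.
        self.message_hooks.append(hook)

    def inbound_message(self, text: str) -> None:
        # A real inbound message (e.g. webchat) fans out to every hook.
        for hook in self.message_hooks:
            hook(text)

    def cron_system_event(self, text: str) -> None:
        # A cron system event is queued for the heartbeat path.
        # No hook is called here, which is the whole point.
        self.heartbeat_queue.append(text)


def demo() -> tuple[list[str], list[str]]:
    """Return (what the hook saw, what is waiting in the heartbeat queue)."""
    gateway = ToyGateway()
    seen_by_hook: list[str] = []
    gateway.on_message(seen_by_hook.append)
    gateway.inbound_message("hello from webchat")
    gateway.cron_system_event("automation:secure-backup")
    return seen_by_hook, list(gateway.heartbeat_queue)
```

In this model the hook records only the webchat message, while the cron payload sits in the heartbeat queue until something on that path decides to act on it.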

Attempt #3: HEARTBEAT.md as a router

At this point we chose “peace” (the philosophical move): stop fighting the architecture, align with it.

If cron systemEvent is routed through heartbeat, then we should handle it in HEARTBEAT.md.

So we added a deterministic rule:

- If inbound text is exactly automation:secure-backup, run the backup script with:

  - OPENCLAW_MCP_SERVER=mcp-secure-backup

  - OPENCLAW_BACKUP_KEEP=3
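The rule itself lives in HEARTBEAT.md as instructions, but its logic can be sketched in Python to show what “deterministic” means here: an exact string match, nothing fuzzy. This is a minimal sketch under assumptions; the function names and the script_path parameter are hypothetical, and only the trigger text and the two environment variables come from our setup.

```python
from __future__ import annotations

import os
import subprocess
from typing import Optional

TRIGGER = "automation:secure-backup"
BACKUP_ENV = {
    "OPENCLAW_MCP_SERVER": "mcp-secure-backup",
    "OPENCLAW_BACKUP_KEEP": "3",
}


def env_for_inbound(text: str) -> Optional[dict[str, str]]:
    """Return the extra environment for the backup, or None if text is not the trigger."""
    # Exact comparison on purpose: no strip(), no lower(), no substring match.
    if text != TRIGGER:
        return None
    return dict(BACKUP_ENV)


def handle_inbound(text: str, script_path: str) -> Optional[int]:
    """Run the backup script (hypothetical path) only for the exact trigger text."""
    extra = env_for_inbound(text)
    if extra is None:
        return None
    # Merge the two variables into the current environment and run the script.
    completed = subprocess.run([script_path], env={**os.environ, **extra}, check=False)
    return completed.returncode
```

Anything that is not the exact trigger text returns None and runs nothing, so a chat message that merely mentions the trigger cannot start a backup.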

Also, since we needed to identify the encryption key’s ID so the MCP tool could fetch the key, we moved the “secret UUID handle” to a protected file:

.path/backup-key-id (chmod 600)

We made this call so:

- no secret handles in cron payload

- no secret handles in hooks

- no secret handles in “agent memory” (which is basically a transcript waiting to be searched)
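A minimal sketch of how a script could read that handle defensively, assuming a POSIX system. The function name read_key_id is hypothetical, and refusing to read a file with looser permissions is an extra safeguard added for illustration, not something our backup script is documented to do.

```python
import stat
from pathlib import Path


def read_key_id(path: Path) -> str:
    """Read the secret handle, refusing files readable by group or others."""
    mode = stat.S_IMODE(path.stat().st_mode)
    # 0o077 masks the group and other permission bits; chmod 600 leaves them all at zero.
    if mode & 0o077:
        raise PermissionError(f"{path} has mode {mode:03o}; expected 600 or stricter")
    key_id = path.read_text(encoding="utf-8").strip()
    if not key_id:
        raise ValueError(f"{path} is empty")
    return key_id
```

The handle is only an identifier, so leaking it does not leak the key, but failing loudly on a world-readable file keeps the setup honest over time.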

Next punch line: it still didn’t reliably execute from the cron run…

Even with wakeMode=now, the cron job was “ok”, the reminder fired… and yet, nothing ran.

The conveyor belt was still running. Still no robot arm.

So what’s the conclusion?

Main-session systemEvent cron is not the right mechanism for reliable execution.

It’s for reminders and nudges, not for “guaranteed” automation.

The final solution: Isolated agentTurn cron job (the only one that actually runs)

After reading the docs, we found that OpenClaw cron supports two payload kinds:

- systemEvent (main session, routed through heartbeat)

- agentTurn (isolated session, executes an actual agent turn)

What we needed was the second one, so we created an isolated cron job:

- --session isolated

- --message instructing the agent to run the script as a command (not “vibe it”)

- --no-deliver so it doesn’t spam the main chat

- 3am schedule

- 1200s timeout (enough for the backup to finish)
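To make the “run it as a command” part concrete, here is a small sketch that builds the payload flags listed above. The helper name, the wording of the message, and the script path are assumptions for illustration. The schedule and timeout are left out on purpose, because this post does not name the flags for them.

```python
from __future__ import annotations

import shlex


def isolated_job_flags(script_path: str) -> list[str]:
    """Build the payload flags named in this post for an isolated cron job."""
    # Spell out the exact command so the agent has nothing to interpret.
    message = (
        "Run exactly this shell command and nothing else, "
        f"then report its exit code: {shlex.quote(script_path)}"
    )
    return ["--session", "isolated", "--message", message, "--no-deliver"]
```

The message is deliberately boring: one quoted command and one thing to report back.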

Then we ran it manually once:

- It created a new encrypted backup file

- It pruned old backups automatically to keep the last 3

Eureka! That’s what “working” looks like.

- Not “the run status says ok”

- Not “I saw a reminder line”

- A file exists, with a timestamp, and retention policy has executed.
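That definition of “working” can be checked mechanically. The sketch below is one way to do it, not the code of our MCP tool: the function names, the .enc suffix, and the idea of sorting by modification time are all assumptions.

```python
from __future__ import annotations

import time
from pathlib import Path


def list_backups(backup_dir: Path, suffix: str = ".enc") -> list[Path]:
    """Backups in backup_dir, newest first by modification time."""
    files = [p for p in backup_dir.iterdir() if p.is_file() and p.name.endswith(suffix)]
    return sorted(files, key=lambda p: p.stat().st_mtime, reverse=True)


def prune_old_backups(backup_dir: Path, keep: int, suffix: str = ".enc") -> list[Path]:
    """Delete everything except the newest `keep` backups; return what was deleted."""
    if keep < 1:
        raise ValueError("keep must be at least 1")
    stale = list_backups(backup_dir, suffix)[keep:]
    for path in stale:
        path.unlink()
    return stale


def backup_looks_healthy(
    backup_dir: Path, keep: int, max_age_seconds: float, suffix: str = ".enc"
) -> bool:
    """True if a fresh, non-empty backup exists and retention has been applied."""
    backups = list_backups(backup_dir, suffix)
    if not backups or len(backups) > keep:
        return False
    newest = backups[0].stat()
    age = time.time() - newest.st_mtime
    return newest.st_size > 0 and age <= max_age_seconds
```

A check like backup_looks_healthy(backup_dir, keep=3, max_age_seconds=26 * 3600) looks at the artifact itself, which is exactly what the cron run status cannot tell you.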

Lessons learned (and why this is bigger than backups)

1) “Velocity is an illusion” when you don’t know the execution boundary

We went fast. We moved knobs. We restarted components. We patched config.

But we didn’t start with the question that matters:

What execution contract does this scheduler guarantee?

A scheduler that delivers reminders is not a scheduler that runs jobs. That’s not a bug. That’s a design choice.

2) For OpenClaw, a prompt is not an instruction, it’s a “treaty”

When a system says “routed through heartbeat”, your next question should be:

- Does heartbeat run automatically right now?

- Does it have permission to run tools?

- Does it run deterministically, or only when polled?

We treated that line as “FYI”, not as a strict policy.

3) Secrets belong in secret plumbing, not in “agent memory”

This one matters more as we add more secrets and more periodic tasks.

We made two critical moves:

- the encryption password is retrieved via a secure service

- the “secret identifier” is stored in a root-only file, not in cron definitions, not in chat messages, not in hooks

4) Hooks are powerful, but only if you’re listening to the right event stream

Hooks worked fine. They just weren’t the right interception point for cron system events.

The lesson isn’t “hooks are broken”, the lesson is: events are scoped.

Systems have boundaries, your automation has to respect those boundaries.

5) The scalable pattern: isolated jobs for real work, main session for human-facing summaries

If we’re going to add more periodic tasks, the architecture should look like this:

- Isolated cron job runs the real automation.

- Delivery mode is either:

  - none (quiet), or

  - announce (if you want a controlled summary)

This keeps your main session readable, and your automation reliable.

If you’re building similar automation, do this first

Before you schedule anything, answer these questions:

- What does the scheduler execute?

  - A “reminder”?

  - A “turn”?

  - A script?

- Where does the payload route?

  - Hooks?

  - Heartbeat?

  - A separate execution engine?

- What is the artifact that proves success? (in this case)

  - A file exists

  - A checksum matches

  - A retention policy pruned

- Where will secrets live?

  - Not in code

  - Not in prompts

  - Not in transcripts

  - In secret plumbing
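For the “a checksum matches” item, a minimal sketch using only the Python standard library. It assumes the expected SHA-256 digest was recorded somewhere at backup time; where it is stored is up to you, and the function names are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so large backups fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def checksum_matches(path: Path, expected_hex: str) -> bool:
    """Compare the file's digest with the recorded one."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())
```

Hashing the encrypted file means the check never needs the key, which keeps it compatible with the “OpenClaw never holds the secret” constraint.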

Build the pipeline. Then let the AI drive.

That’s again an example of the “Tony Stark” mindset I talk about: define the parameters, constrain the tools, and let the machine do the “noise”.

Because if you don’t… You’ll have a cron job that says “ok”, a reminder that fires on time, and exactly zero backups when you need them most.

For now, we have met our objective: we can restore OpenClaw to previous states or recover from “broken” configurations, and we have a robust and secure way to ask OpenClaw to do “important” things for us without giving away our precious secrets.

You can find the Backup MCP Tool and the Secrets Retrieval Service on my GitHub.